One-Shot Trauma: When Reinforcement Learning and Human Minds Overcorrect

My experience with choking taught me more about the limits of AI than any textbook could.

The Day My Internal Agent Received a -1,000,000 Penalty

It only took a second to rewire my brain.

By early 2022, I was just your average joe, living life day by day. Eating was one of my daily tasks that was necessary, automatic, and unconscious. It was a simple background task that required no conscious thought. Then, one day, I failed on that task that was supposed to be automatic. I choked on my food.

It wasn't just a moment of discomfort, it was a primal, terrifying alert that flooded my entire system. The world narrowed to the single, desperate need for air. My heart hammered against my ribs, adrenaline surged, and in that moment, my brain registered a single, blaring data point: This is death. This is how you die.

Even after the danger passed, the damage was done. For weeks and months, I had a debilitatingly difficult time eating solid foods. Every sensation in my throat was a potential prerequisite to a disaster, triggering panic attacks that, in a cruel feedback loop, caused GERD, which in turn created more throat sensations.

What I didn’t realize at the time was that my brain was running a perfect, albeit terrifying, simulation of a core problem in artificial intelligence. I had become a reinforcement learning agent that had just received a penalty so massive, so disproportionate to all my previous experiences, that my entire operating policy had been corrupted.

A Crash Course in Reinforcement Learning

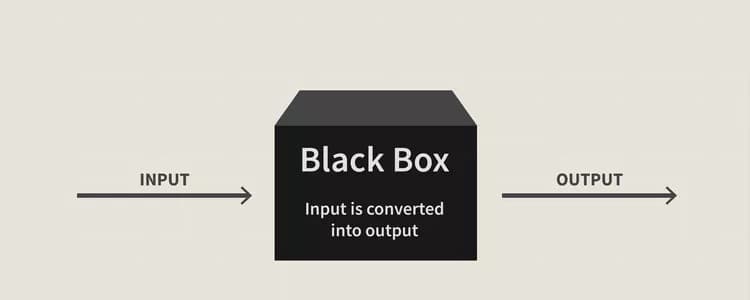

Before we get to the catastrophe, let’s quickly define the terms. Reinforcement Learning (RL) is a field of AI where we teach an "agent" to make decisions. Think of it like training a dog, but with algorithms.

The basic components are simple:

The Agent: The learner and decision-maker (the AI, the dog, or in my case, me).

The Environment: The world the agent operates in (a video game, a maze, or the dinner table).

The State: A snapshot of the agent's current situation ("I am at a crossroad," "My plate is full of solid food").

The Action: Something the agent can do ("Turn left," "Take a bite").

The Reward/Penalty: The feedback the agent gets from the environment after an action (+1 for finding cheese, -1 for hitting a wall).

The agent’s goal is to learn a policy, a strategy or a map of which actions to take in which states to maximize its total cumulative reward over time. It does this through trial and error, gradually updating its policy as it explores the world. For 99.9% of its life, this process is gradual and iterative.

The Catastrophe

Now, let's return to our RL agent. It’s happily exploring its environment, collecting small rewards: +1, +5, +2. Its policy is getting better and better.

Then, it wanders into an unknown territory and takes an action. The environment's response isn't a small penalty. It's a catastrophic, system-shocking -1,000,000.

From a technical standpoint, the value assigned to that state-action pair plummets. The agent's algorithm, designed to maximize reward, now sees any path leading to that state as unimaginably bad. The policy updates instantly and brutally: "Whatever you do, never go there again."

This is precisely what happened in my brain.

State: "Eating solid food."

Action: "Swallowing."

Penalty: The choking experience, a neurological -1,000,000.

My internal policy was updated in a flash. The value of that action became catastrophic. My brain’s simple new rule was: Avoid this state at all costs. It is not worth the risk.

Reinforcement learning agents reacts to a huge penalty as much as humans react to real life traumas. Some human traumas are key to survival and most of it were gained through evolution. Humans were designed to have panic attacks during moments in the wild, for example when we encounter lions or other predators in the forest, but this part of our brain weren’t optimized for the modern human experience.

The Flawed Policy and The Dragon of Chaos

This is where a core AI challenge, the Exploration-Exploitation Dilemma, collides with human psychology. An agent must balance exploiting known good strategies with exploring new ones to find even better rewards.

After a catastrophic penalty, this dilemma is shattered. The agent stops exploring. It retreats into a tiny, "safe" corner of its world, only performing actions it knows won't lead to disaster. It has sacrificed growth and opportunity for the illusion of total safety.

This is where the ideas of psychologist Jordan Peterson become incredibly relevant. Peterson often frames the world as a duality of Order and Chaos.

Order is the realm of the known, the predictable, the safe. It's your home, your routine, your settled knowledge.

Chaos is the unknown, the unexpected. It is the place of both terrifying dragons and undiscovered treasure.

My normal life of eating was Order. The choking incident was a violent, sudden immersion into Chaos. My response, my agent's response, was to retreat and drastically shrink the walls of my known, safe Order. Solid food, a previously mundane part of Order, was now re-categorized as Chaos. It was a territory on my internal map suddenly marked, "Here be dragons."

But here's the flaw in the policy, for both me and the AI: you can’t just skip eating. The AI agent, by walling off a huge part of its environment, might be dooming itself to a sub-optimal existence, missing out on the vast rewards that lie just beyond that one terrifying spot.

A perfect reinforcement learning agent (as well as the perfect human life experience) consists of a balance between a good amount of order, and a few bits of chaos. It is in order that we find peace in the world, and in facing chaos that we learn how to adapt to the ever changing world. A reinforcement learning agent, and a human, would paradoxically be unsafe in a perfectly ordered environment because it will never be prepared for chaos.

Recalibration

So, how do you fix a policy that has been broken by a single, traumatic data point? You can't just delete the memory. The agent, and the human, needs new countervailing data.

Peterson's prescription for this is not to ignore Chaos, but to confront it voluntarily. You don't wait for the dragon to find you again. You approach its lair on your own terms, in small, manageable steps.

In psychology, this is the foundation of exposure therapy. For me, it meant I couldn't go back to eating a steak dinner. But I could start with something soft. I could eat a piece of well-chewed bread. I was voluntarily taking a small step back into the "dangerous" territory. I was telling my internal agent, "See? We took an action in this state-space, and the penalty was 0, not -1,000,000."

Each successful, non-choking bite was a small, positive reward (+1) that began to slowly, painstakingly, update my flawed policy.

We can apply this same logic to building more resilient AI:

Curriculum Learning: Don't throw the agent into the most chaotic environment at once. Start it in a simple, safe version and gradually increase the complexity, the AI equivalent of starting with soft foods.

Reward Shaping: Can we design systems that give small rewards for "bravery", for cautiously re-exploring a territory with a known high penalty? This encourages the agent not to write it off forever.

Decaying Memory: Perhaps the memory of a massive penalty shouldn't be permanent. It could slowly decay over time if not reinforced, allowing the agent to become cautiously curious once more.

At first, I had just eating soft foods starting with oatmeal and yogurt. I then eventually tried to eat sandwiches, before I fully tried eating meat with rice. It was a such an experience I never knew I will encounter. I monitored progress every step of the way and gave myself a pat on the back whenever I faced a fear I was very hesitant to do at first.

Conclusion: Building Agents with Digital Courage

My experience taught me that humans and our most advanced learning algorithms share a fundamental vulnerability: we are profoundly shaped by our worst moments. A single, catastrophic failure can create a brittle, over-cautious policy that prioritizes avoiding pain over seeking growth.

The path to recovery and optimal performance, for both man and machine, isn't about erasing that bad memory. It’s about courageously and methodically gathering new data to prove that the catastrophe was an outlier, not the rule.

Perhaps the next frontier in AI isn't just about bigger models or faster processing. It’s about instilling the digital equivalent of courage, the ability to face the remembered dragon, learn from failure, and refuse to let a single scar define the entire map of one's world.

At this some point, the technical knowledge in AI (reinforcement learning) is determined by how we imitate lessons and occurrences from psychology. Learning comes from a good amount of order to stand on, and a small amount of chaos to learn from.